Digesting Research: Redesigning Data Systems to be Agent-First

By Shu Liu et al. Published on arXiv.

Workload Requirements

While LLM agents may match human reasoning capabilities, they lack awareness of the underlying data and of the characteristics of the data systems that store it. They can make up for this by exploring tirelessly, issuing hundreds or thousands of requests a second. These requests are not really attempts at a solution; they are merely intended to gather information. This is referred to as Agent Speculation, or Agent Grounding.

Present-day workloads on data systems are far more intermittent and targeted, whereas many of these agent queries are likely wasteful. How should data systems evolve to better support agentic workloads?

Based on their observations, the authors expect agentic workloads to:

- demand high throughput;

- be heterogeneous, as exploratory research often is;

- contain lots of redundant requests: many requests may access similar data or perform overlapping operations;

- be steerable: speculation is fundamentally exploratory, and might therefore be better served by allowing direct communication between data systems and LLM agents.
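The redundancy characteristic suggests an obvious form of sharing: run each distinct request once and hand the result to every agent that asked for it. The following is a minimal sketch, assuming requests arrive in a batch as SQL strings and using SQLite purely as a stand-in backend. The function names are hypothetical, and the normalization (collapsing whitespace) is deliberately naive: it would also alter whitespace inside string literals.

```python
import sqlite3


def normalize(sql: str) -> str:
    # Naive normalization: collapse runs of whitespace into single spaces.
    # (This would also alter whitespace inside string literals.)
    return " ".join(sql.split())


def run_batch_deduplicated(conn: sqlite3.Connection, requests: list[str]):
    """Execute each distinct request once and share its result.

    Returns (results, executions): results[i] answers requests[i], and
    executions counts how many queries actually hit the database.
    """
    cache: dict[str, list[tuple]] = {}
    results = []
    for sql in requests:
        key = normalize(sql)
        if key not in cache:
            # First time we see this request: execute it for real.
            cache[key] = conn.execute(key).fetchall()
        results.append(cache[key])
    return results, len(cache)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE schools (name TEXT, county TEXT)")
    conn.executemany(
        "INSERT INTO schools VALUES (?, ?)",
        [("A", "Alameda"), ("B", "Fresno"), ("C", "Alameda")],
    )
    batch = [
        "SELECT COUNT(*) FROM schools",
        "SELECT   COUNT(*)\nFROM schools",  # same request, different layout
        "SELECT county, COUNT(*) FROM schools GROUP BY county ORDER BY county",
    ]
    results, executions = run_batch_deduplicated(conn, batch)
    print(results)
    print(executions)
```

Exact-match deduplication only scratches the surface; the sharing the paper has in mind extends to overlapping operations, not just identical requests.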

The authors loaded the BIRD text-to-SQL benchmark into a variety of backends and ran agents powered by Qwen2.5-Coder-7B-Instruct and GPT-4o-mini. Their experiments show that:

- The success rate of agentic workloads increases with the number of requests issued (14% to 70%).

- Across queries, the number of distinct sub-plans of each size is often less than 10% to 20% of the total, which represents considerable potential for sharing computation.

- Requests from agents vary greatly in the information they need, from coarse-grained exploration of metadata and data statistics to partial or more complete attempts at solving the task. Coarse-grained, exploratory requests typically happen early on.

- Speculation traces become much more efficient when the agent is proactively given grounding pertinent to the task, reducing the number of queries by more than 20%, depending on the phase.

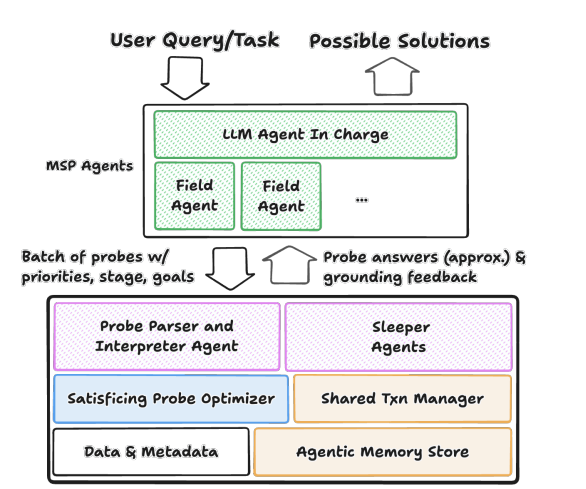
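To make the sub-plan statistic concrete, here is a toy way of measuring it, assuming each query plan is available as a tree of operator labels. This is not necessarily how the authors computed their numbers; the plan representation and the function names are illustrative assumptions. For each sub-plan size (number of operators), it divides the number of distinct sub-plans by the total number of sub-plan occurrences across all queries. A low fraction means many queries repeat the same work.

```python
from collections import defaultdict

# Assumed plan representation: a tuple (operator_label, child_1, child_2, ...).
# Leaves are tuples holding only a label, e.g. ("scan:schools",).
Plan = tuple


def subplans(plan: Plan):
    """Yield every sub-plan (subtree) of `plan`, including the plan itself."""
    yield plan
    for child in plan[1:]:
        yield from subplans(child)


def size(plan: Plan) -> int:
    """Number of operator nodes in a (sub-)plan."""
    return 1 + sum(size(child) for child in plan[1:])


def distinct_fraction_by_size(plans: list[Plan]) -> dict[int, float]:
    """For each sub-plan size, return (#distinct sub-plans) / (#sub-plan occurrences)."""
    total: dict[int, int] = defaultdict(int)
    distinct: dict[int, set] = defaultdict(set)
    for plan in plans:
        for sub in subplans(plan):
            s = size(sub)
            total[s] += 1
            distinct[s].add(sub)  # identical subtrees collapse in the set
    return {s: len(distinct[s]) / total[s] for s in sorted(total)}


if __name__ == "__main__":
    scan = ("scan:schools",)
    filt = ("filter:county='Alameda'", scan)
    plans = [("count", filt), ("project:name", filt), ("count", scan)]
    print(distinct_fraction_by_size(plans))
```

For these three small plans, which share a scan and a filter, the fractions come out as 1/3 for size 1, 2/3 for size 2, and 1.0 for size 3. Real plans would first need a canonical form, so that equivalent sub-plans written differently compare equal.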

Agent-First Data System Architectures

The initial requests coming from the agent are better seen as probes than as direct queries for information. At this stage, the agent is mostly grounding itself: collecting metadata and formulating a solution. These probes resemble a data engineer browsing information_schema to find tables, views, and columns that might help answer a given query.
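A metadata probe of this kind might look like the following. It is a sketch that uses SQLite as an assumed stand-in: SQLite has no information_schema, so it reads the `sqlite_master` table and `PRAGMA table_info` instead, which expose roughly the same grounding information. The helper names are hypothetical, and the keyword search is a naive substring match.

```python
import sqlite3


def describe_schema(conn: sqlite3.Connection) -> dict[str, list[tuple[str, str]]]:
    """Return {table_or_view: [(column, declared_type), ...]}."""
    schema = {}
    objects = conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type IN ('table', 'view') AND name NOT LIKE 'sqlite_%' "
        "ORDER BY name"
    ).fetchall()
    for (name,) in objects:
        # Quote the identifier, since PRAGMA arguments cannot be bound as parameters.
        quoted = '"' + name.replace('"', '""') + '"'
        cols = conn.execute(f"PRAGMA table_info({quoted})").fetchall()
        # Each row is (cid, name, type, notnull, dflt_value, pk).
        schema[name] = [(col[1], col[2]) for col in cols]
    return schema


def find_columns(schema: dict[str, list[tuple[str, str]]], keyword: str) -> list[str]:
    """Naive keyword match over column names, like skimming information_schema."""
    keyword = keyword.lower()
    return [
        f"{table}.{column}"
        for table, columns in schema.items()
        for column, _ in columns
        if keyword in column.lower()
    ]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE schools (cds_code TEXT, county TEXT)")
    conn.execute("CREATE TABLE scores (cds_code TEXT, avg_math REAL)")
    print(find_columns(describe_schema(conn), "cds"))
```

A probe like this answers nothing about the task itself; it only tells the agent where the relevant data might live, which is exactly the grounding phase described above.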

Treating these first entry points into the data system as probes lets the system tailor its responses to them. It could return exact answers or approximations, and proactively provide information beyond the answer itself, steering agents through grounding feedback.
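As an illustration of a probe reply that carries more than the answer, here is a hypothetical `count_probe` that answers "how many rows have column = value?" exactly for small tables, approximately (from a systematic 1-in-k sample on SQLite's `rowid`) for large ones, and attaches steering hints. Everything here is an assumption for illustration: the names, the thresholds, the sampling scheme (which presumes `rowid` is unrelated to the values being counted), and the choice of hint. The hint shown targets a common failure mode, a literal that does not match the stored values.

```python
import sqlite3
from dataclasses import dataclass, field


@dataclass
class ProbeResponse:
    """Hypothetical probe reply: an answer plus steering hints."""
    answer: int
    approximate: bool
    hints: list[str] = field(default_factory=list)


def quote(identifier: str) -> str:
    return '"' + identifier.replace('"', '""') + '"'


def count_probe(conn: sqlite3.Connection, table: str, column: str, value,
                exact_limit: int = 10_000, k: int = 10) -> ProbeResponse:
    """Count rows where column = value, exactly or from a 1-in-k sample."""
    t, c = quote(table), quote(column)
    n_rows = conn.execute(f"SELECT COUNT(*) FROM {t}").fetchone()[0]
    if n_rows <= exact_limit:
        hits = conn.execute(
            f"SELECT COUNT(*) FROM {t} WHERE {c} = ?", (value,)
        ).fetchone()[0]
        resp = ProbeResponse(answer=hits, approximate=False)
    else:
        # Systematic sample: every k-th rowid, scaled back up by k.
        hits = conn.execute(
            f"SELECT COUNT(*) FROM {t} WHERE rowid % ? = 0 AND {c} = ?", (k, value)
        ).fetchone()[0]
        resp = ProbeResponse(answer=hits * k, approximate=True)
        resp.hints.append(f"estimated from a 1-in-{k} sample of {n_rows} rows")
    if hits == 0:
        # Grounding feedback: show values that do exist, e.g. to expose
        # a casing mismatch such as 'alameda' vs 'Alameda'.
        examples = [row[0] for row in conn.execute(
            f"SELECT DISTINCT {c} FROM {t} ORDER BY {c} LIMIT 5")]
        resp.hints.append(
            f"no match for {value!r} in the rows examined; example values: {examples}")
    return resp


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE schools (name TEXT, county TEXT)")
    conn.executemany("INSERT INTO schools VALUES (?, ?)",
                     [("A", "Alameda"), ("B", "Fresno"), ("C", "Alameda")])
    print(count_probe(conn, "schools", "county", "alameda"))
```

An agent that receives the hint can correct its literal on the next request instead of spending several more probes discovering the mismatch, which is the kind of saving the grounding result above points to.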

The paper goes as far as separating this out into a proper server-side agent with its own memory store. It also defines another agent, the shared transaction manager, to handle updates to the first agent's knowledge base. It then offers further suggestions for building a system that answers probing queries from external LLM agents.
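One way to picture how a memory store and a transaction manager might interact is sketched here, under loud assumptions: the class and method names are hypothetical, the memory store is reduced to a dictionary of prior probe results, and the transaction manager's only job is to record a write and evict memory entries derived from the table it touched. This is an illustration of the idea, not the paper's design.

```python
from collections import defaultdict


class AgenticMemoryStore:
    """Hypothetical server-side memory of prior probe results.

    Each entry records which tables it was derived from, so it can be
    evicted when one of those tables changes.
    """

    def __init__(self) -> None:
        self._entries: dict[str, object] = {}
        self._by_table: dict[str, set[str]] = defaultdict(set)

    def remember(self, probe: str, result: object, tables: set[str]) -> None:
        self._entries[probe] = result
        for table in tables:
            self._by_table[table].add(probe)

    def recall(self, probe: str):
        # Returns None when nothing is remembered for this probe.
        return self._entries.get(probe)

    def invalidate_table(self, table: str) -> None:
        # Evict every remembered result that depended on `table`. Stale
        # references under other tables are harmless: popping a missing
        # probe later is a no-op (at worst, an unnecessary eviction).
        for probe in self._by_table.pop(table, set()):
            self._entries.pop(probe, None)


class SharedTransactionManager:
    """Hypothetical coordinator: logs a write, then evicts stale memory."""

    def __init__(self, store: AgenticMemoryStore) -> None:
        self.store = store
        self.log: list[tuple[str, str]] = []

    def commit(self, table: str, change: str) -> None:
        self.log.append((table, change))
        self.store.invalidate_table(table)


if __name__ == "__main__":
    store = AgenticMemoryStore()
    tm = SharedTransactionManager(store)
    store.remember("count schools", 3, {"schools"})
    store.remember("join scores", [("A", 500.0)], {"schools", "scores"})
    tm.commit("scores", "UPDATE scores SET avg_math = 510")
    print(store.recall("count schools"), store.recall("join scores"))
```

Tracking table-level dependencies is the coarsest reasonable choice; finer-grained tracking (columns, predicates) would evict less but cost more bookkeeping.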

Conclusion

An interesting problem with potentially many solutions, but the core idea may be the most interesting part: handling the overload from LLM agents by answering their probing queries better.

You can also already see some of the disadvantages MCP is likely to run into, as well as hints of big tech revenue models.