Digesting Research: Are Agents.md Files Actually Useful?

Why This Matters Beyond Engineering

As organizations scale AI-assisted development, every unnecessary token is a cost multiplier. This research shows that naively adding context files to AI agent workflows can increase inference costs by over 20% without improving task success rates, and sometimes while lowering them. For engineering leaders managing AI tooling budgets, this is a concrete example of where more is not better. The lesson generalizes beyond context files: evaluate AI productivity investments with the same rigor you’d apply to any other technology spend. Measure actual outcomes, not assumed ones.

Note: this post refers to context files as Agents.md, but the same idea goes by other names, such as Claude.md and copilot-instructions.md.

The Agents.md craze has gotten a bit out of hand. Everywhere you look, there’s someone on LinkedIn proudly posting their latest agent instructions with inspirational captions and a tone suspiciously close to AA‑meeting affirmations. It’s like they’ve achieved spiritual enlightenment! Entertaining, for sure, but it also highlights how much hype, noise, and mixed messaging there is around these files.

What’s missing is actual evidence, and that’s exactly what this paper set out to provide. It evaluates the impact of context files on task‑completion performance using established SWE‑bench tasks across popular repositories, as well as a new dataset of real-world issues pulled from repos where developers already commit their own context files.

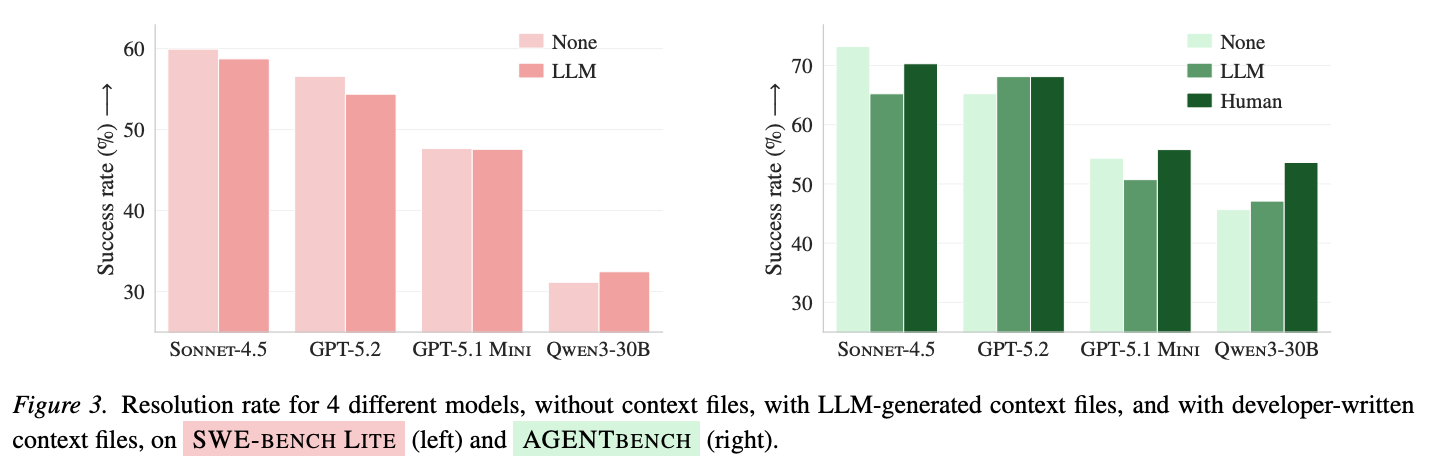

The result: LLM-generated context files tend to reduce task success rates while increasing inference cost by over 20%. Human-written context files have a milder effect.

Caveat: this mostly concerns older models (Claude Sonnet-4.5, OpenAI GPT-5.2 and GPT-5.1, and Qwen3-30B).

There are some nuances, though. LLM-generated context files tend to perform the worst, whereas developer-written context files tend to slightly improve agent performance. In both cases, after looking at the agent traces, the authors concluded that context files lead to more thorough testing and exploration by coding agents.

What’s in an Agents.md?

An Agents.md file usually sits at the root of the repository, or at least somewhere so that an AI agent can reliably discover it. In practice, these files tend to include:

- Repository structure: A high‑level map of how the project is organized, where important modules live, and how components relate to each other.

- Developer tooling & workflows: Information about build systems, testing frameworks, linters, CLI tools, or any custom scripts the agent should be aware of.

- Style guides & conventions: Coding standards, naming conventions, review expectations, formatting rules—essentially the project’s internal etiquette.

- Design patterns & architectural guardrails: The principles the codebase follows (or must follow): preferred patterns, layering rules, abstraction boundaries, and the “don’ts” that keep the system maintainable.

In short: an Agents.md is a distilled form of institutional knowledge (part manual, part onboarding doc, part guardrail system) written for machines rather than humans.

Curious to read more, or dig through some examples: more information here.

Experiment

For four different coding agents (Claude Sonnet-4.5, OpenAI GPT-5.2 and GPT-5.1, and Qwen3-30B), three context file settings were considered:

- None: no context file available

- LLM: an LLM-generated context file

- Human: a developer-provided context file

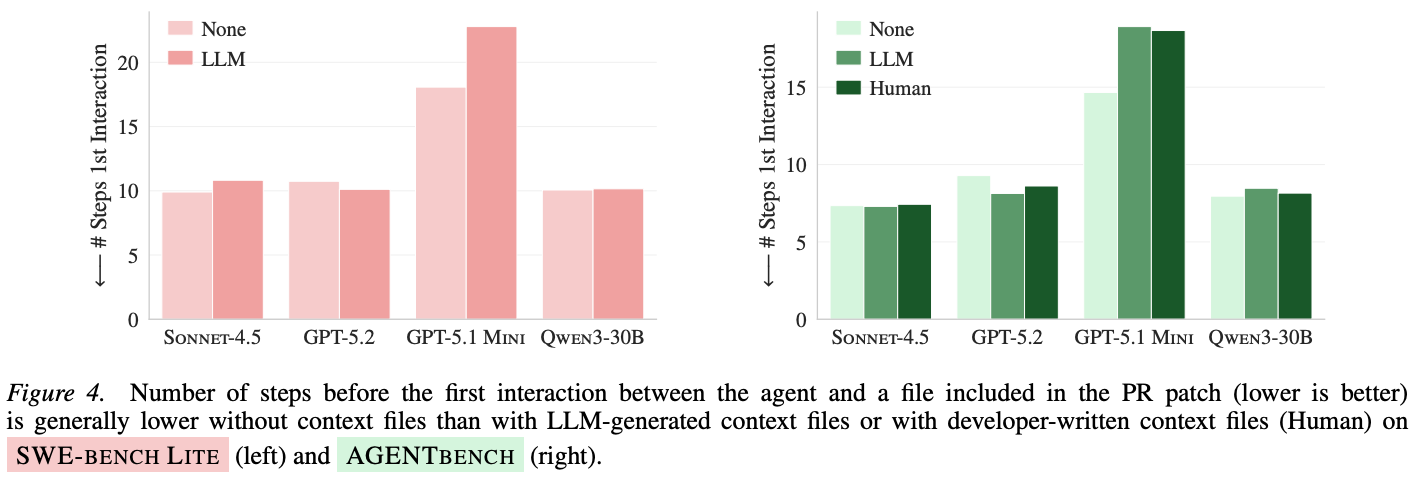

A run counts as a success if the agent produces a patch that leads to all tests passing. In addition, the number of steps the agent needs to complete the task is recorded.
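
The two measurements described above can be sketched in a few lines of Python. This is a minimal illustration rather than the paper's actual harness: the `Run` record, its field names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    """One agent attempt at one task (hypothetical record layout)."""
    setting: str        # "none", "llm", or "human"
    tests_passed: int   # tests passing after the agent's patch is applied
    tests_total: int    # tests associated with the task
    steps: int          # steps the agent took


def is_success(run: Run) -> bool:
    # A run counts as a success only if every test passes.
    return run.tests_total > 0 and run.tests_passed == run.tests_total


def summarize(runs: list[Run]) -> dict[str, dict[str, float]]:
    """Per-setting success rate and average step count."""
    summary = {}
    for setting in sorted({r.setting for r in runs}):
        group = [r for r in runs if r.setting == setting]
        summary[setting] = {
            "success_rate": mean(1.0 if is_success(r) else 0.0 for r in group),
            "avg_steps": mean(r.steps for r in group),
        }
    return summary


# Made-up data purely to exercise the functions; not results from the paper.
example_runs = [
    Run("none", 10, 10, 20),
    Run("none", 9, 10, 24),
    Run("llm", 10, 10, 30),
    Run("llm", 7, 10, 34),
]
print(summarize(example_runs))
```

Keeping success strictly binary (all tests or nothing) mirrors the pass criterion described above, while the step count is tracked separately so that cost and correctness are not conflated.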

Observations

Human context files improve the performance of the agent

Context files also tend to increase agent costs

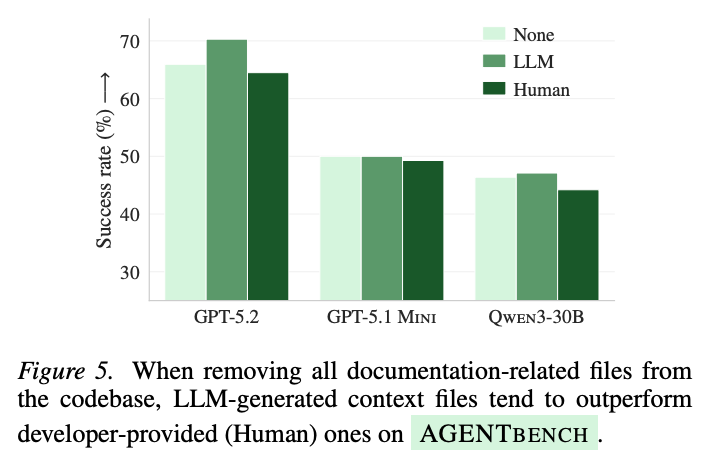
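
To make the cost observation concrete, here is a back-of-the-envelope sketch. Only the roughly 20% overhead comes from the research; the per-task cost and the monthly task volume are invented inputs for illustration.

```python
def extra_spend(baseline_cost_per_task: float, tasks: int, overhead: float = 0.20) -> float:
    """Additional spend caused by a relative cost overhead applied to every task."""
    return baseline_cost_per_task * tasks * overhead


# Hypothetical inputs: $0.50 per task, 10,000 agent tasks per month.
monthly_extra = extra_spend(0.50, 10_000)
print(f"${monthly_extra:,.2f} extra per month")
```

The point of the exercise is that the overhead scales linearly with task volume, so it matters most for teams running agents at scale.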

Context files are redundant with existing documentation, and vice versa

Removing the documentation from a repository and leaving only the context file for the agent seems to lead to better results. This may also explain some of the earlier findings.

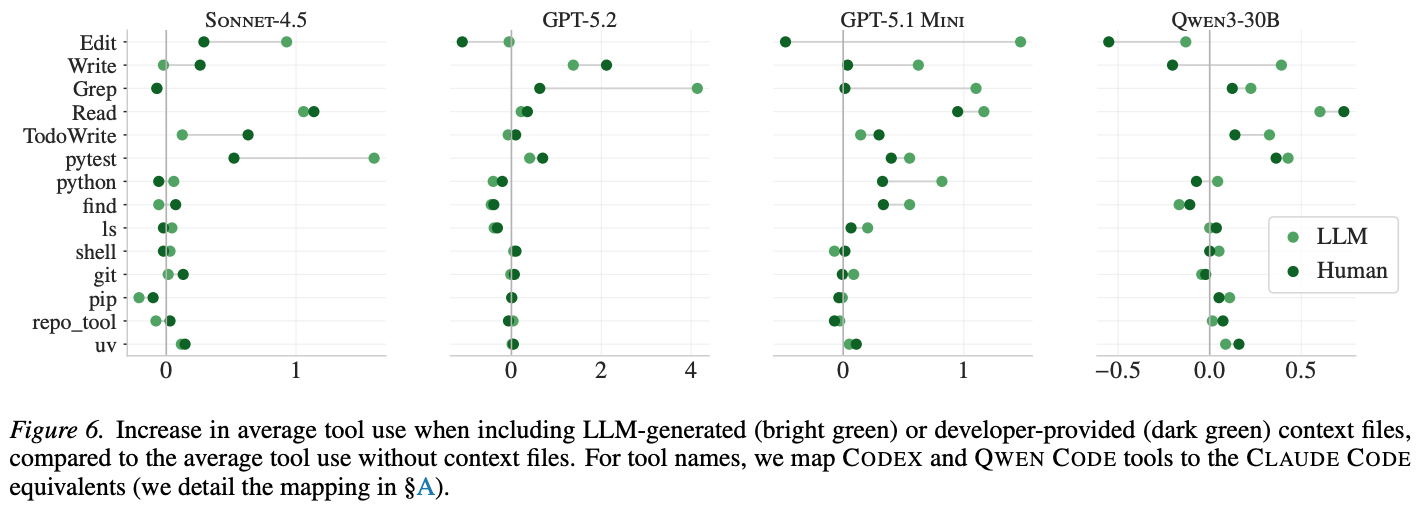

Context files lead to more testing and exploration

Average tool use increases when an LLM-generated or developer-provided context file is included. The traces also make clear that agents typically follow context files: tools are used far more often per instance when the context file mentions them (some up to 2.5 times per instance, compared with fewer than 0.05 times otherwise). In other words, you have to tell agents which tools to use in the development process.
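
The mentioned-versus-not-mentioned comparison above can be sketched as follows. This is a minimal illustration that assumes each agent trace has already been reduced to the set of tools named in the context file and the list of tools the agent called; that layout, the tool names, and the data are all hypothetical.

```python
from collections import defaultdict


def avg_calls_per_instance(instances, tool):
    """Average number of calls to `tool` per instance, split by whether
    the instance's context file mentions the tool.

    `instances` is a list of (mentioned_tools, tool_calls) pairs:
    mentioned_tools is a set of tool names found in the context file,
    tool_calls is the list of tool names the agent invoked.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [call count, instance count]
    for mentioned_tools, tool_calls in instances:
        key = "mentioned" if tool in mentioned_tools else "not_mentioned"
        totals[key][0] += tool_calls.count(tool)
        totals[key][1] += 1
    return {key: calls / n for key, (calls, n) in totals.items()}


# Made-up traces, not data from the paper.
instances = [
    ({"pytest", "ruff"}, ["pytest", "ruff", "pytest"]),
    ({"pytest"}, ["pytest"]),
    (set(), ["pytest"]),
    (set(), []),
]
print(avg_calls_per_instance(instances, "pytest"))
print(avg_calls_per_instance(instances, "ruff"))
```

A large gap between the two averages for a given tool is what "context files are typically followed" looks like in the data.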

Other Findings

- Stronger models don’t generate better context files

- Sensitivity to the prompt used to generate LLM context files is generally small

Caveats

- The evaluation focuses on Python repositories!

Conclusion

The verdict is simple: context files aren’t a cheat code. Developer-written ones can help, LLM-written ones usually don’t, and all of them make agents work harder. Use them sparingly, intentionally, and only when they add clarity. Try to see through the hype!