Digesting Research: F3: The Future-Proof File Format

This is a mostly objective summary of the paper F3: The Open-Source Data File Format for the Future.

Why this is interesting

In most practical work, Parquet is seen as the undeniable standard to move towards! Most data platform environments in the cloud have already been moving to Apache Iceberg or Databricks Delta. Nonetheless, Parquet remains mysterious for inexperienced data engineers and data scientists who are more used to CSVs. As such, it is uncommon for practitioners in the field of Data & AI to be critical of Parquet.

Some of the writers of this particular paper really are heavyweights in the field:

- Wes McKinney: the creator of Pandas, as well as co-author of a host of other major data tools and formats such as Ibis, Arrow, Parquet and Feather. This is one of his particularly well-known blog posts.

- Andrew Pavlo: a celebrated professor in “Databaseology” (see for example this talk) and a close collaborator of Stonebraker, who in turn was the creator of Postgres. They have some fascinating research, here and here.

Also, as with all my blog posts, I did not write a single letter using AI. I did use AI to more easily digest some sections of these papers.

F3: Future-Proof File Format

If you have been working around data platforms for a while, you probably have run across Parquet and ORC formats. You might have looked under the hood, and you might have wondered if these file formats are useful. Surely they are more useful than storing plain JSONs, CSVs or plain text? Perhaps there are not many other options, or perhaps you have been locked in by Enterprise Architecture decisions or a third-party vendor.

Well, since the inception of these data formats, a lot has happened. The cloud started to take shape, and with that came the ubiquity of cheap, fast storage as well as more performant networking (3G, 4G, 5G…). What did not improve significantly is computational performance (CPUs). In other words, Parquet and ORC were designed based on assumptions about relative hardware performance and workload access patterns that are now outdated.

This is further exacerbated by the rise of high-bandwidth, high-latency cloud data lakes, which often cause systems to be bottlenecked by compute rather than I/O. The diversity of data has also been increasing continuously: think of wide ML tables containing features, high-dimensional vector embeddings or large unstructured blobs. The existing formats are not suited for these usage patterns and tend to be woefully inefficient for them.

There are some recent advancements in compression, indexing and filtering that address these deficiencies, but existing file formats are not designed to be easily extensible. Furthermore, there is often not one single implementation: Parquet and ORC have several, across various languages (Go, Rust, Python, etc.). On top of that, a system may be unable to decipher a newer file’s contents if it uses an older version of the format’s library. This shifts the complexity of handling different format versions away from the format and onto the system, and often forces the system to support only the lowest common denominator of features, dragging along legacy implementations.

There are efforts to create new formats (Meta Nimble, Lance, TSFile, Bullion, BtrBlocks, etc), but they do not really address these underlying issues. How does F3 do this?

- F3 contains (1) the metadata of a file’s contents, (2) the physical grouping layout of encoded data in a file, and (3) the API to access data that is agnostic to a data’s encoding scheme.

- The first two address efficiency shortcomings that the paper identifies in Parquet and ORC.

- The third addresses interoperability and extensibility by exposing a public API that defines how implementations decode compressed data in the file. The encoding methods thereby become plug-ins that are installed and upgraded separately from the core library. Next to that, to ensure that any library version can read any file, F3 embeds a decoder implementation as a WebAssembly binary inside the file!

In other words, every F3 file contains both data and the code to read that data! This future-proofs F3, avoids the problems described earlier and enables faster evolution than existing solutions. For example, developers can deploy future encoding methods to production systems by including the Wasm code in the files, without worrying about upgrading library versions across their entire fleet.

Thereby, the paper makes the following three contributions:

- The introduction of F3

- The proposal of a plug-in-based decoding API that allows Wasm binaries of the decoding implementation to be embedded in the file

- An evaluation of the F3 layout design considerations and the Wasm-embedding mechanism on real-world data sets

F3

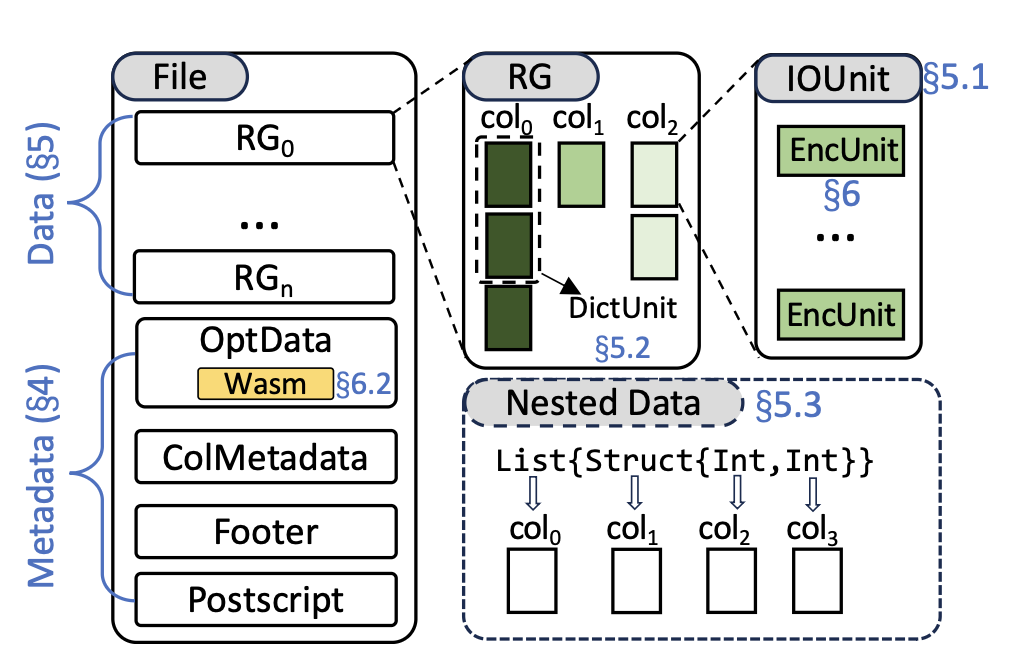

So let’s dive in: what does F3 look like exactly? The paper presents this overview.

At a high level, F3 adopts the same PAX (Partition Attributes Across) layout as Parquet and ORC. This means that the data is partitioned horizontally into row groups, which are then stored column by column. The part of a column that falls within one row group is called a column chunk, which is in turn divided into smaller units: pages in Parquet, compression chunks in ORC. The page is the fundamental unit for encoding, compression and data skipping in Parquet.
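To make the PAX idea concrete, here is a minimal sketch in plain Python. It is not how F3 or Parquet is implemented; the function name `to_pax` and the in-memory dict representation are assumptions made purely for illustration. Rows are first cut horizontally into row groups, and each group is then stored column by column.

```python
from typing import Any


def to_pax(rows: list[dict[str, Any]], rows_per_group: int) -> list[dict[str, list[Any]]]:
    """Split rows into row groups, then store each group column by column."""
    if rows_per_group <= 0:
        raise ValueError("rows_per_group must be positive")
    groups = []
    for start in range(0, len(rows), rows_per_group):
        group_rows = rows[start:start + rows_per_group]
        # One "column chunk" per column: all values of that column in this group.
        columns = {name: [row[name] for row in group_rows] for name in group_rows[0]}
        groups.append(columns)
    return groups


rows = [{"id": i, "city": c} for i, c in enumerate(["AMS", "AMS", "NYC", "NYC", "SFO"])]
layout = to_pax(rows, rows_per_group=2)
for number, group in enumerate(layout):
    print(number, group)
```

Reading only the `city` column then means touching one list per row group, instead of every row.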

F3 does this too, but distinguishes itself in a few ways.

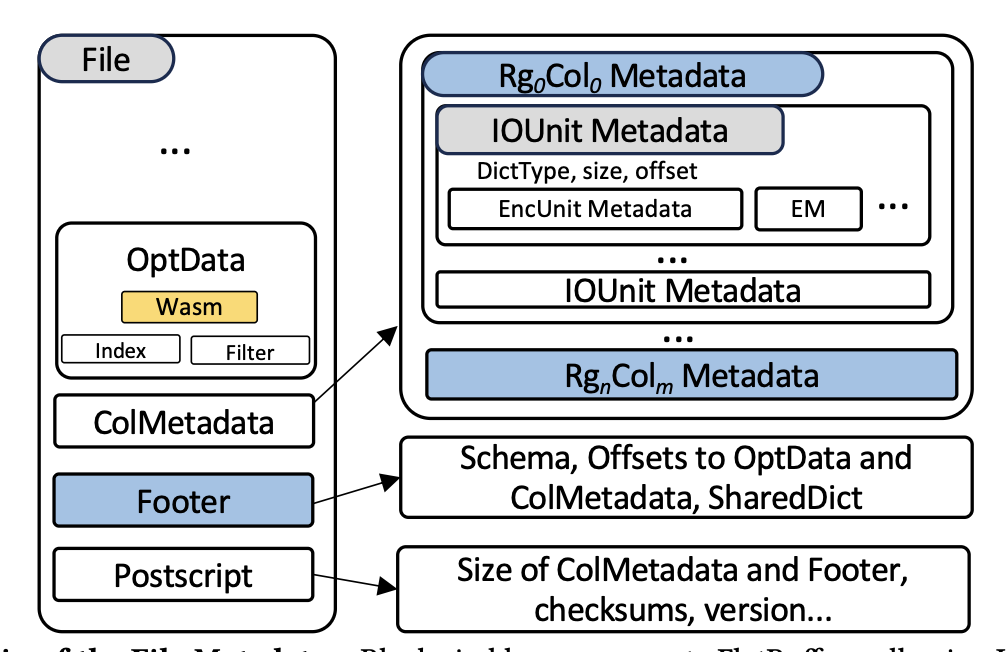

First of all, F3 eliminates the deserialization overhead from which Parquet and ORC suffer. Workloads on wide tables with lots of columns often require only a subset of these columns. However, the metadata parsing protocols within Parquet and ORC do not support random access, and instead require deserialization of the entire metadata. The cost of this step can sometimes even be comparable to that of actually reading the data columns! In F3, the metadata of each column is stored centrally in the ColMetadata, so a reader can fetch only the parts it needs. Column-level metadata is further aggregated into the Footer, and there is also a PostScript that mostly collects sizes in order to advise on data retrieval strategies. All this further lowers the I/O requirements.
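The difference between "parse everything" and "random access" metadata can be sketched with a toy footer. This is not F3's actual footer encoding (the evaluation section mentions FlatBuffers); the offset-table layout and the names `build_footer` and `read_column_meta` are assumptions for illustration only. The point is that an offset index lets a reader decode the metadata of one column without touching the others.

```python
import json
import struct


def build_footer(column_metadata: list[dict]) -> bytes:
    """Toy layout: [u32 count][u32 offsets * (count + 1)][blob 0][blob 1]..."""
    blobs = [json.dumps(meta).encode("utf-8") for meta in column_metadata]
    offsets = [0]
    for blob in blobs:
        offsets.append(offsets[-1] + len(blob))
    header = struct.pack(f"<I{len(offsets)}I", len(blobs), *offsets)
    return header + b"".join(blobs)


def read_column_meta(footer: bytes, column: int) -> dict:
    """Decode the metadata of a single column using the offset table."""
    (count,) = struct.unpack_from("<I", footer, 0)
    if not 0 <= column < count:
        raise IndexError(f"column {column} out of range")
    # Two adjacent offsets delimit this column's blob.
    start, end = struct.unpack_from("<2I", footer, 4 + 4 * column)
    blobs_base = 4 + 4 * (count + 1)
    return json.loads(footer[blobs_base + start:blobs_base + end])


footer = build_footer([{"name": f"col_{i}", "rows": 1000} for i in range(10_000)])
meta = read_column_meta(footer, 4242)
print(meta)
```

With 10,000 columns, the reader above parses one small JSON blob rather than all 10,000, which is the property the paper is after for wide tables.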

Second, unlike Parquet, F3 decouples the physical I/O unit from the logical row group, such that the file writer can tune the size independently according to different storage media. Within Parquet, the concept of a row group is highly overloaded and tied to the I/O granularity of the file. Since the file writer cannot flush a partial column chunk to disk, it must buffer the entire row group before writing. Next to that, the size of a column chunk can vary drastically (50KB vs 50MB), requiring readers to implement another I/O coalescing layer to achieve optimal retrieval speed on cloud storage. Note that Databricks’ Delta format does address some of the same problems, but not by acting as another I/O coalescing layer. It merely optimizes the use of Parquet overall, without touching things like buffering or caching when reading the data directly.

So in F3 a row group merely represents a logical horizontal partition to facilitate the semantic grouping of data. F3 sets a size for the IOUnit (default 8 MB, which is optimal for cloud storage) and can simply flush whenever that capacity is reached. As such, the writer does not need to buffer and wait for the entire column chunk to be complete.

Note: large column chunks are a common reason for OOM failures in Parquet writers. As such, it also adds stability for wide table writes!
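The flushing behaviour described above can be sketched as a toy writer. The class `IOUnitWriter` and its fields are hypothetical and only illustrate the idea; F3's real writer is not shown in this post. The writer flushes a fixed-size unit as soon as enough bytes are buffered, so its peak memory stays close to one IOUnit regardless of how large the row group is.

```python
class IOUnitWriter:
    """Toy column writer that flushes fixed-size IOUnits instead of whole column chunks."""

    def __init__(self, io_unit_bytes: int = 8 * 1024 * 1024) -> None:
        self.io_unit_bytes = io_unit_bytes
        self.buffer = bytearray()
        self.flushed: list[bytes] = []  # stands in for the file on disk
        self.peak_buffered = 0

    def append(self, encoded: bytes) -> None:
        self.buffer += encoded
        self.peak_buffered = max(self.peak_buffered, len(self.buffer))
        # Flush every full IOUnit that the buffer currently holds.
        while len(self.buffer) >= self.io_unit_bytes:
            self.flushed.append(bytes(self.buffer[:self.io_unit_bytes]))
            del self.buffer[:self.io_unit_bytes]

    def close(self) -> None:
        # Write out whatever is left as a final, smaller unit.
        if self.buffer:
            self.flushed.append(bytes(self.buffer))
            self.buffer.clear()


writer = IOUnitWriter(io_unit_bytes=1024)
for _ in range(100):
    writer.append(b"x" * 100)  # 10,000 bytes of "encoded" column data in total
writer.close()
print(len(writer.flushed), writer.peak_buffered)
```

A Parquet-style writer would instead hold all 10,000 bytes until the row group is complete; here the buffer never grows much beyond one unit.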

Thirdly, F3 decouples the dictionary-encoding scope from the logical row group. This allows the file writer to determine the most effective dictionary-encoding scope for each individual column in order to achieve the best compression. Dictionary encoding maps the values in a column to integers (dictionary codes), which can be encoded further using RLE, bitpacking, etc. It is actually one of the most widely used and effective lightweight encodings in columnar formats! In Parquet and ORC, the scope of each dictionary is fixed to align with the corresponding row group, meaning that each column chunk must have exactly one dictionary. However, different column chunks could benefit from different dictionary scopes to achieve better compression! The paper then shows an example with different columns that benefit from different dictionary encoding strategies. Some benefit from very local scopes (up to 64K-row blocks), while others favor global dictionaries like the ones Parquet and ORC offer.
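A small sketch shows why the best scope depends on the column. The helpers below (`dictionary_encode`, `encode_with_scope`, `summarize`) are illustrative names, and the cost model (dictionary entries plus bit-packed code width) is a simplifying assumption, not the paper's measurement method.

```python
def dictionary_encode(values: list[str]) -> tuple[list[str], list[int]]:
    """Map each distinct value to an integer code, in order of first appearance."""
    dictionary: dict[str, int] = {}
    codes = [dictionary.setdefault(value, len(dictionary)) for value in values]
    return list(dictionary), codes


def encode_with_scope(values: list[str], scope_rows: int) -> list[tuple[list[str], list[int]]]:
    """Build one independent dictionary per block of `scope_rows` rows."""
    return [dictionary_encode(values[i:i + scope_rows])
            for i in range(0, len(values), scope_rows)]


def bits_per_code(dictionary_size: int) -> int:
    """Bit width needed to bit-pack codes for a dictionary of this size (at least 1)."""
    return max(1, (dictionary_size - 1).bit_length())


def summarize(blocks: list[tuple[list[str], list[int]]]) -> tuple[int, int]:
    """Return (total dictionary entries stored, total bits used for codes)."""
    entries = sum(len(dictionary) for dictionary, _ in blocks)
    bits = sum(len(codes) * bits_per_code(len(dictionary)) for dictionary, codes in blocks)
    return entries, bits


clustered = ["a", "a", "b", "b", "c", "c", "d", "d"]  # values change slowly
interleaved = ["a", "b"] * 4                          # values repeat everywhere

for name, column in [("clustered", clustered), ("interleaved", interleaved)]:
    global_cost = summarize(encode_with_scope(column, scope_rows=len(column)))
    local_cost = summarize(encode_with_scope(column, scope_rows=2))
    print(name, "global:", global_cost, "local:", local_cost)
```

In this toy model the clustered column gets narrower codes with a local scope at no extra dictionary cost, while the interleaved column only pays for repeating the same dictionary in every block.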

Finally, each IOUnit consists of one or multiple encoding units, which store the data using the same encoding algorithm and serve as a minimal byte buffer during encoding and decoding. The paper provides Rust-style pseudocode to explain the expected methods of such an encoding unit. It also provides a couple of extension points to the design. The decoding kernels required to read the file are then stored within the file, to ensure that any system has the necessary logic to access the file’s data. Wasm was chosen because of its portability and performance. Note that it runs on nearly all modern hardware and platforms while remaining language-agnostic. This makes an F3 file self-contained! No additional libraries need to be installed just to read legacy data.

It is important to mention that there is a slight speed tradeoff when using Wasm over native decoding.

F3 Evaluations

Is F3 the only upcoming format? No, there are more, and some have a lot of momentum behind them too!

The authors go on to compare F3 to Parquet, ORC, Lance, Vortex and Nimble.

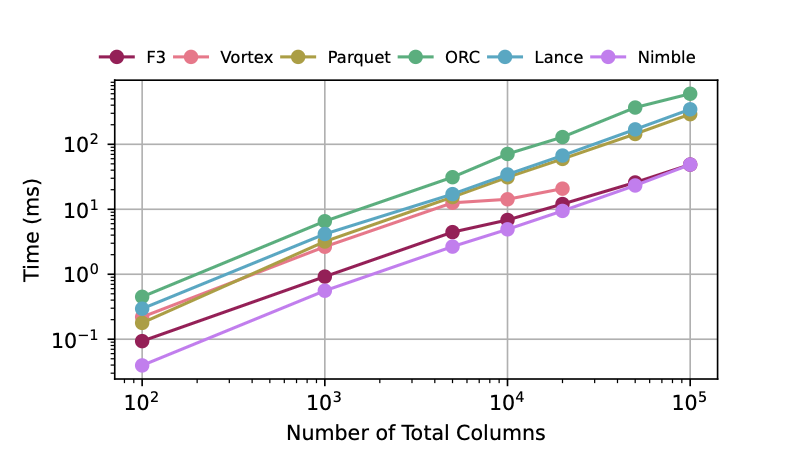

Metadata Overhead

There is always overhead in the footer, and its size is proportional to the number of columns. F3 performs well, and is only slightly slower than Nimble due to the verifications that FlatBuffers requires. These verifications are necessary to prevent various issues when randomly accessing a FlatBuffer. Without the verification, F3 even outperforms Nimble.

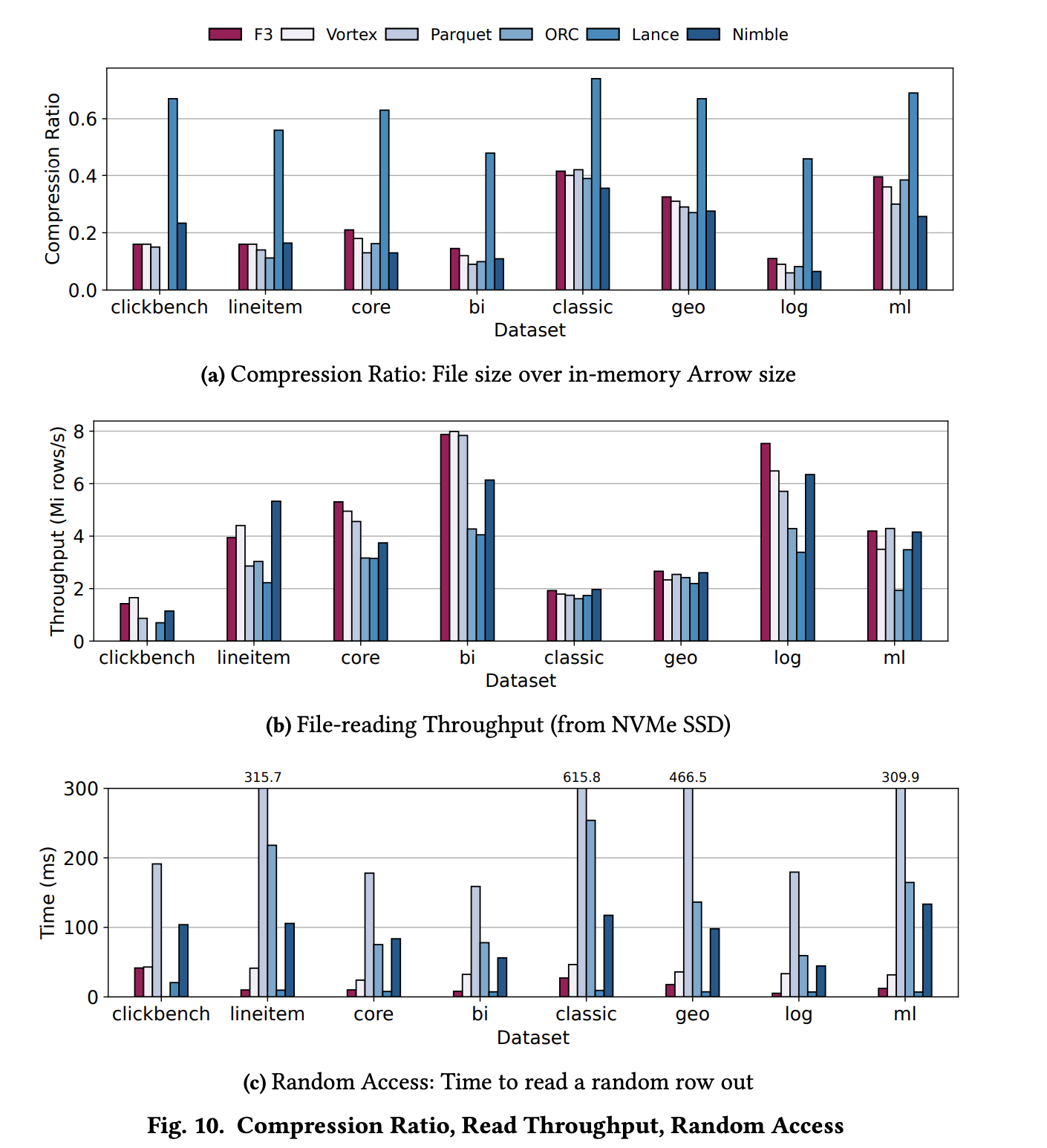

Compression Ratio and Read Throughput

F3 achieves comparable or superior read throughput to Parquet, albeit with a slightly worse compression ratio. This verifies that F3 has not sacrificed efficiency, compared to other formats, for its extensibility benefits.

Random Access

F3 comes in second after Lance, which does not have cascading encoding or compression like the others. This enables direct offset calculation for certain types (e.g., integers) and minimizes read amplification. Vortex is slower than F3 because it has a relatively large prefetch of its footer despite accessing a single row. Parquet incurs the highest latency as it requires decoding the entire row group to access a single row. ORC and Nimble employ row indexes to skip portions of a row group, but they still need to fully decode an Encoding Unit to randomly access the data.
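"Direct offset calculation" is easy to illustrate for fixed-width integers. The sketch below assumes a plain, uncompressed little-endian int64 column in memory; it is not Lance's actual file layout, and `read_int64_at` is a hypothetical helper. Because every value has the same width, row `i` always lives at byte `i * 8`, so a single row can be fetched without decoding any of its neighbours.

```python
import struct


def read_int64_at(buffer: bytes, row: int) -> int:
    """Fetch one row from a plain little-endian int64 column by computing its offset."""
    offset = row * 8  # fixed width: 8 bytes per value
    if row < 0 or offset + 8 > len(buffer):
        raise IndexError(f"row {row} out of range")
    return struct.unpack_from("<q", buffer, offset)[0]


column = struct.pack("<5q", 10, 20, 30, 40, 50)
print(read_int64_at(column, 3))
```

Once a column is compressed or cascaded through several encodings, this shortcut disappears: the reader has to decode at least the surrounding unit first, which is the read amplification mentioned above.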

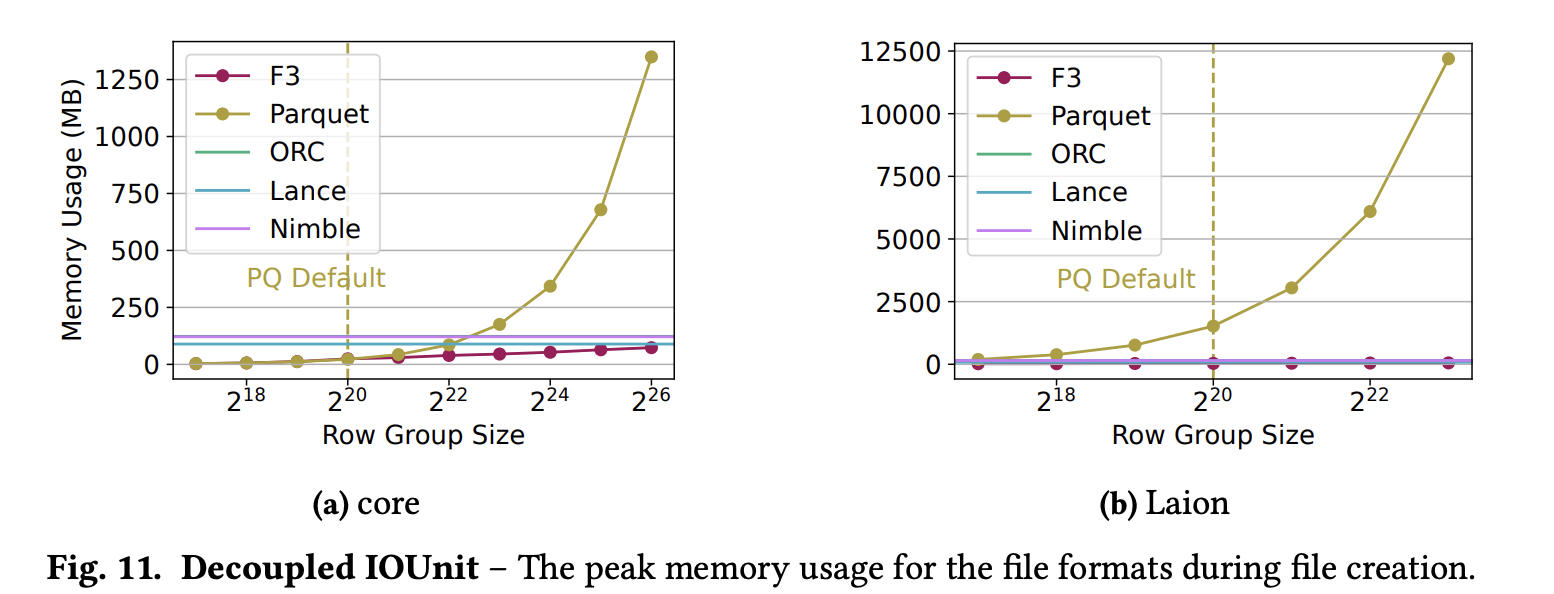

Decoupled IOUnit

Parquet’s memory usage grows proportionally to the row group size, because it must buffer the whole row group before writing to disk: the flush buffer size is aligned with the row group size. F3 and Lance flush an IOUnit as soon as a column has buffered enough data. As a result, writing is a low-memory operation. F3 thus effectively decouples the logical row group partitioning parameter from the physical I/O unit size, enabling independent tuning and resulting in consistent write memory consumption and predictable read chunk sizes across heterogeneous datasets.

Nimble and ORC manage write memory by limiting the physical size of a row group. For wider tables this might yield row groups with a smaller number of rows, which in turn negatively impacts read performance.

Flexible Dictionary Scopes

As expected, more flexible dictionary scopes yield overall better compression ratios. However, there is a tradeoff between encoding time and compression ratio. The results here remain a bit inconclusive. Since this is a new lever that would become available to formats, it would require further research to get right.

WASM Decoder

There is considerable technical depth in this part of the proposed solution, making it hard to do more than regurgitate the paper itself. It is mentioned that the storage overhead is insignificant, whereas the future potential for extensibility is significant.

Note that Wasm-driven decoding kernels do exhibit a trade-off compared to native implementations, since the latter are generally closer to the hardware. However, in most cases the authors expect the slowdown to be marginal compared to the opportunity gains from extensibility and backwards compatibility.

Next to that, it’s important to note that the embedded Wasm decoder is not necessarily used in all cases. If a native decoder is available that can decode the underlying data faster, generally that should be used. The embedded decoder then merely acts as a backup.
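The "native first, embedded as backup" rule can be sketched as a small lookup. Everything here is hypothetical: the names `pick_decoder`, `plain_u8` and `delta_u8` are made up for illustration, and plain Python functions stand in for both native kernels and the Wasm binaries embedded in a file. No real Wasm runtime is involved.

```python
from typing import Callable

Decoder = Callable[[bytes], list[int]]


def decode_plain_u8(data: bytes) -> list[int]:
    """Every byte is one value."""
    return list(data)


def decode_delta_u8(data: bytes) -> list[int]:
    """First byte is the starting value, each following byte is a delta."""
    values, total = [], 0
    for byte in data:
        total += byte
        values.append(total)
    return values


def pick_decoder(encoding_id: str,
                 native: dict[str, Decoder],
                 embedded: dict[str, Decoder]) -> tuple[str, Decoder]:
    """Prefer a native decoder; fall back to the one shipped inside the file."""
    if encoding_id in native:
        return "native", native[encoding_id]
    if encoding_id in embedded:
        return "embedded", embedded[encoding_id]
    raise LookupError(f"no decoder available for encoding {encoding_id!r}")


# An old reader that only knows plain_u8 natively ...
native_decoders = {"plain_u8": decode_plain_u8}
# ... opening a newer file that ships decoders for everything it uses.
embedded_decoders = {"plain_u8": decode_plain_u8, "delta_u8": decode_delta_u8}

source, decode = pick_decoder("delta_u8", native_decoders, embedded_decoders)
print(source, decode(bytes([5, 1, 1, 2])))
```

The old reader still decodes the newer `delta_u8` data, just through the slower embedded path, which is the backwards-compatibility argument in a nutshell.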

Addendum

It is interesting to note that before the F3 format was released, there even was a plan to establish a consortium between Carnegie Mellon University, Tsinghua University, Zuckerberg’s Meta, the Dutch CWI (Centrum Wiskunde & Informatica, which counts Snowflake co-founder Marcin Zukowski among its PhD graduates), Voltron Data, Nvidia and SpiralDB. That fell through due to Meta’s NDAs around their preview of Velox Nimble, and then everybody involved released their own format:

- Meta’s Nimble: https://github.com/facebookincubator/nimble

- CWI’s FastLanes: https://github.com/cwida/FastLanes

- SpiralDB’s Vortex: https://vortex.dev

- CMU + Tsinghua F3: https://github.com/future-file-format/f3